Introducing the Intel® RealSense™ D400 Product Family

By Anders Grunnet-Jepsen, John N. Sweetser, John Woodfill

“Depth cameras” are cameras that are able to capture the world as a human perceives it – in both color and “range.” The ability to measure the distance (aka “range” or “depth”) to any given point in a scene is the essential feature that distinguishes a depth camera from a conventional 2D camera.

Humans have two eyes that see the world in color, and a brain that fuses the information from both these images to form the perception of color and depth in something called stereoscopic depth sensing. This allows humans to better understand volume, shapes, objects, and space and to move around in a 3D world.

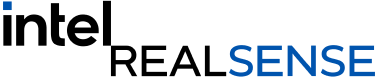

Figure 1. The output of a depth camera. Left: color image of a cardboard box on a black carpet. Right: depth map with faux color depicting the range to the object.

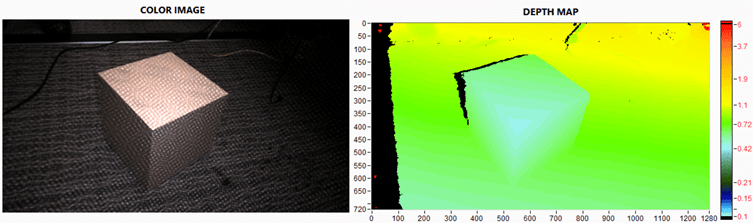

Figure 2. The 3D Point Cloud of the box captured in Fig 1. Left shows 3D mesh without color texture, and right shows the same scene with the image color texture applied. The top and bottom are different views seen by rotating the point-of-view. Note that this is a single capture, and not simply photos taken from different viewpoints. The black shadow is the information missing due to occlusions when the photo was taken, i.e. the back of the box is of course not visible.
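To make the step from a depth map (Fig 1) to a point cloud (Fig 2) concrete, here is a minimal sketch of the standard pinhole back-projection. The function name `depth_to_point_cloud` and the intrinsics `fx`, `fy`, `cx`, `cy` are illustrative assumptions, not values or APIs from the D400 family, and the depth map is synthetic.

```python
import numpy as np

def depth_to_point_cloud(depth_m, fx, fy, cx, cy):
    """Back-project a depth map (in metres) into an (N, 3) array of XYZ points.

    Pixels with depth 0 are treated as missing (for example, occluded) and dropped.
    fx, fy, cx, cy are hypothetical pinhole intrinsics in pixels.
    """
    h, w = depth_m.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))  # pixel column / row grids
    z = depth_m
    x = (u - cx) * z / fx  # horizontal offset from the optical axis
    y = (v - cy) * z / fy  # vertical offset from the optical axis
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)
    return points[points[:, 2] > 0]

# Synthetic example: a flat wall 2 m away, with one pixel missing.
depth = np.full((4, 6), 2.0)
depth[0, 0] = 0.0
cloud = depth_to_point_cloud(depth, fx=5.0, fy=5.0, cx=2.5, cy=1.5)
print(cloud.shape)
```

Because every valid pixel carries its own range, a single capture is enough to rotate the resulting points and view them from a new angle; the dropped pixels correspond to the "shadow" regions where no depth was measured.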

Intel® RealSense™ Stereo Depth Camera D400 Family

Intel has been developing depth sensing solutions based on almost every variant of depth sensing technology for several years, and every technology has its tradeoffs. Of all the depth sensing technologies, stereo vision is arguably the most versatile and the most robust across a large variety of usages. While one could be tempted to think that stereoscopic vision is “old-school” technology, it turns out that many of the challenges faced by stereo depth sensing in the past have only now been overcome: algorithms were not good enough and were prone to error, computing the depth was too slow and costly, and the systems were not stable over time.

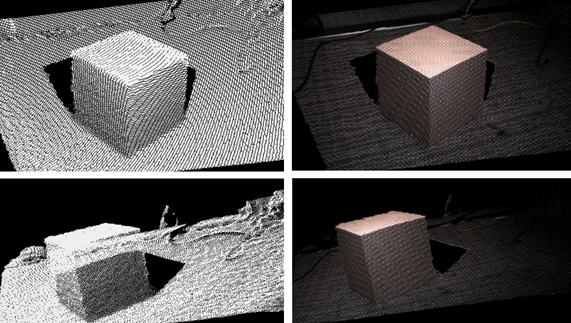

Intel has greatly accelerated the computation of stereo depth by creating custom silicon for depth sensing, the Intel RealSense Vision Processor D4 and D4m. Using a customized variant of a Semi Global Matching algorithm that searches across 128 disparities, these processors can compute over 36 million depth points per second and achieve frame rates of >90 fps, while using less than 22 nW per depth point in a chip package size of 6.3 × 6.3 mm (which is a fraction of that of the Intel® Core™ i7 processor). The goal was to enable computer stereo vision to reach the performance levels (power, thermals, resolution, frame rate, size, cost, etc.) needed for embedding in small consumer electronic devices such as mobile phones, drones, robots, and surveillance systems. With this goal in mind, Intel launched its 2nd-generation ASIC, the Intel RealSense Vision Processor D4, which shows great improvements, especially in environments where the projected light of any active system (assisted stereo, structured light, or ToF) would be washed out or absorbed, such as outdoors or on dark carpets that tend to absorb IR light, as seen in Fig 3.

Figure 3. Example scenes comparing the performance of the previous-generation LR200 module and vision processor with that of the new D410 module and vision processor, showing the vast improvement in one chip generation.
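To illustrate what "searching across 128 disparities" means, the following is a deliberately naive sketch: a brute-force sum-of-absolute-differences (SAD) search along one scanline. It is not the customized Semi Global Matching variant used in the Vision Processor D4, whose details are not given here; the function name, window size, and synthetic data are all assumptions made for illustration.

```python
import numpy as np

def best_disparity(left_row, right_row, x, half_window=3, max_disparity=128):
    """Return the disparity in [0, max_disparity) with the lowest SAD cost
    for the patch centred on column x of the left scanline."""
    patch = left_row[x - half_window : x + half_window + 1]
    costs = []
    for d in range(max_disparity):
        xr = x - d  # the matching pixel sits d columns further left in the right image
        if xr - half_window < 0:
            break  # candidate window would fall off the image edge
        candidate = right_row[xr - half_window : xr + half_window + 1]
        costs.append(np.abs(patch - candidate).sum())
    return int(np.argmin(costs))

# Synthetic scanline: the left view is the right view shifted by a known disparity.
rng = np.random.default_rng(0)
right = rng.random(400)
true_disparity = 37
left = np.roll(right, true_disparity)  # left[i] == right[i - true_disparity]
print(best_disparity(left, right, x=200))  # recovers 37
```

Even this toy version evaluates up to 128 candidate windows per pixel, which gives a sense of why doing the search for tens of millions of depth points per second benefits from dedicated silicon.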

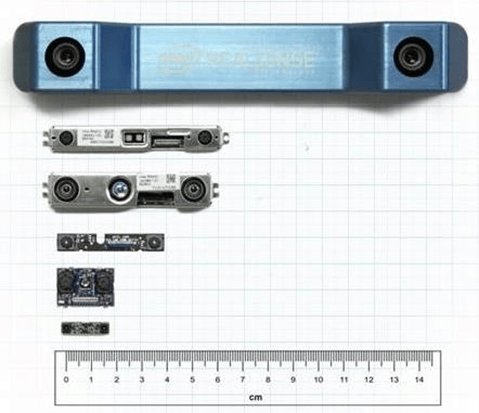

The Intel RealSense D400 Product Family provides additional design flexibility for stereoscopic systems. Fig 4 shows an example of a few different designs of depth sensors that all use the same Intel RealSense™ Vision Processor D4. Why are so many different designs used? The key is that performance can be optimized under different constraints, because stereoscopic systems are extremely customizable and adaptable and can benefit from a large optical industry of commercial off-the-shelf (COTS) components. This is also very important for industrial design considerations. For example, if long range and high quality are paramount and the product is less sensitive to price, it is possible to use higher-performance CMOS sensors (higher dynamic range, more sensitivity, and higher quality) as well as better and larger optics, so that the input images are of high quality. At the other extreme, where cost and size are critical, it is possible to use a small baseline, cheap consumer-grade CMOS sensors, and plastic optics.

Figure 4. A collection of Intel® RealSense™ D400 camera modules that all use the same ASIC.

In general, the main design parameters are (1) baseline, (2) CMOS selection (resolution, global vs. rolling shutter, HDR, color vs. grayscale), (3) field of view (FOV), and (4) quality of lenses. Intel also provides calibrated depth modules configured with specific design parameters that we believe will meet a broad set of use cases. Additionally, we provide two ready-to-use depth cameras, the D415 and D435, so that developers can quickly evaluate this new technology. This product family is a great candidate for fitting multiple spatial constraints and performance requirements, and is in general extremely flexible to design modifications that can adapt it to a design space of size, power, cost, and performance.
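The way baseline, resolution, and FOV trade off against each other can be sketched with the textbook rectified-stereo relation Z = f·B/d, where f is the focal length in pixels, B the baseline, and d the disparity. The numbers below (1280-pixel width, 90° horizontal FOV, 50 mm and 100 mm baselines, a maximum disparity of 127) are hypothetical values chosen for illustration and are not specifications of any D400 module.

```python
import math

def focal_length_px(width_px, hfov_deg):
    """Focal length in pixels for an ideal pinhole camera with the given horizontal FOV."""
    return (width_px / 2) / math.tan(math.radians(hfov_deg) / 2)

def depth_from_disparity(disparity_px, baseline_m, focal_px):
    """Rectified-stereo depth in metres: Z = f * B / d."""
    return focal_px * baseline_m / disparity_px

def depth_step(depth_m, baseline_m, focal_px, disparity_step_px=1.0):
    """Approximate change in depth caused by a small change in disparity: dZ = Z^2 * dd / (f * B)."""
    return depth_m**2 * disparity_step_px / (focal_px * baseline_m)

f = focal_length_px(width_px=1280, hfov_deg=90.0)  # hypothetical sensor and lens

for baseline in (0.05, 0.10):  # hypothetical baselines in metres
    z_min = depth_from_disparity(127, baseline, f)  # closest range if the search stops at d = 127
    step = depth_step(2.0, baseline, f)             # depth change per pixel of disparity at 2 m
    print(f"B={baseline:.2f} m  min depth={z_min:.3f} m  step at 2 m={step:.3f} m")
```

Under these assumptions, doubling the baseline halves the depth step at a given range (better long-range resolution) but doubles the minimum measurable distance, which is one reason a single family can include both compact and wide-baseline modules.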

See specifications and more info on Intel RealSense D400 Series Cameras.
