How-to: Multiple camera setup with ROS

Intel® RealSense™ D400 series depth cameras use stereo-based algorithms to calculate depth. One key advantage of stereo depth systems is the ability to use as many cameras as you want to within a specific scene. In this post, we are going to cover creating a unified point cloud with multiple cameras using ROS.

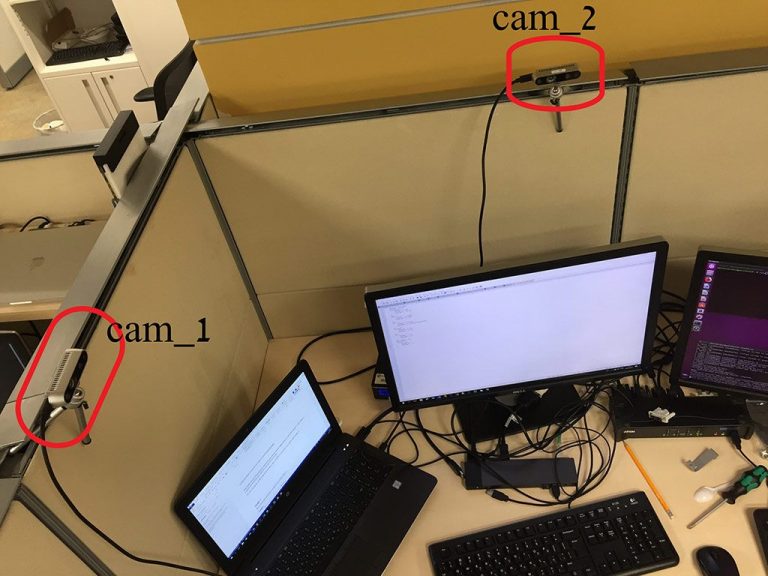

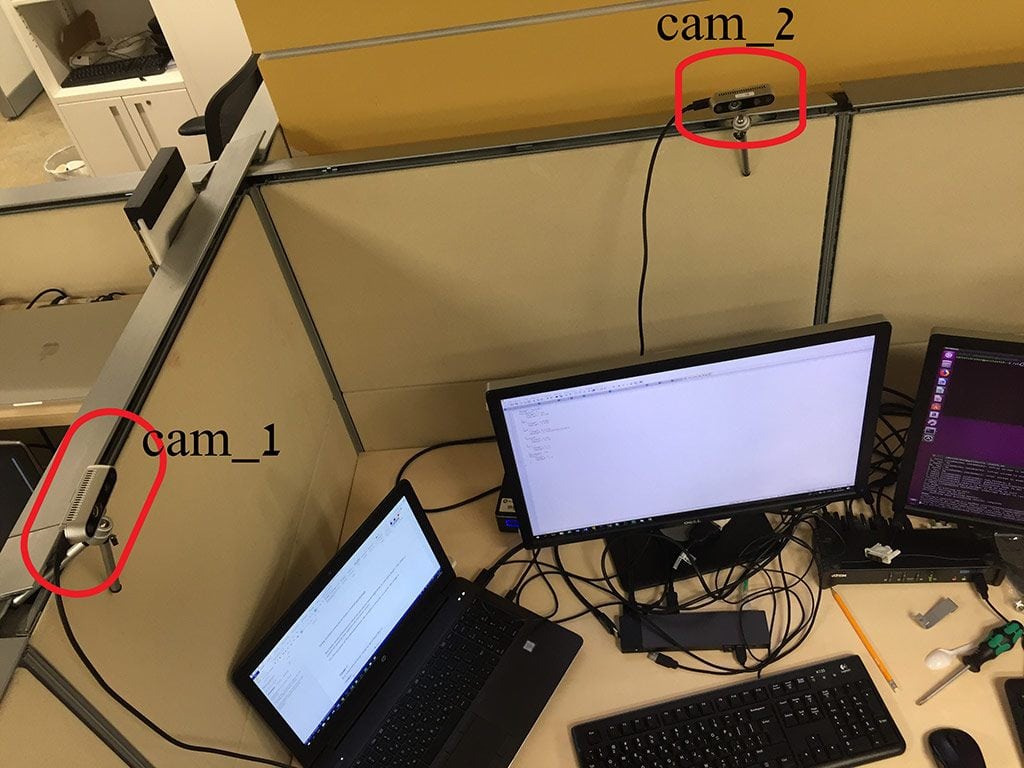

For the initial demonstration, we set up two Intel® RealSense™ D435 cameras looking at the same area from two different points of view.

The cameras themselves have no data regarding their relative position. In Step 3, we’ll use a 3rd party program to set this up.

Step 0: Ensure you have your environment set up.

Install the Intel RealSense SDK 2.0 (librealsense) for Linux* following the instructions here.

Install the ROS (not ROS 2) wrapper for librealsense from here. It is recommended to follow this set of instructions for the installation.

Step 1: Obtaining the camera serial numbers.

If you already know each camera’s serial number you can skip this step.

Otherwise,

1. Open terminal and change directory to catkin_ws

2. Make sure only cam_1 is connected and start the realsense2_camera wrapper:

roslaunch realsense2_camera rs_camera.launch

Note the serial number it finds in the following log line:

[ INFO] [1538983051.813336379]: setupDevice... [ INFO] [1538983051.813399792]: JSON file is not provided [ INFO] [1538983051.813427820]: ROS Node Namespace: camera [ INFO] [1538983051.813442869]: Device Name: Intel RealSense D435 [ INFO] [1538983051.813605893]: Device Serial No: 728312070349 [ INFO] [1538983051.813622583]: Device FW version: 05.10.03.00

The serial number in this case is 728312070349.

Record this somewhere and terminate the node using CTRL+C.

Repeat the step with now only cam_2 connected.

Step 2: Start both cameras.

Open 2 terminal windows (and change directory to catkin_ws directory).

In terminal 1 enter the following (don’t forget to replace 728312070349 with the cam_1 serial number you recorded in Step 1):

roslaunch realsense2_camera rs_camera.launch camera:=cam_1 serial_no:=728312070349 filters:=spatial,temporal,pointcloud_

In terminal 2 enter the following (again, fill in the correct serial number for cam_2):

roslaunch realsense2_camera rs_camera.launch camera:=cam_2 serial_no:=<serial_number_2> filters:=spatial,temporal,pointcloud_

We now have two cameras running with their own point clouds.

Step 3: Publish spatial connection between cameras.

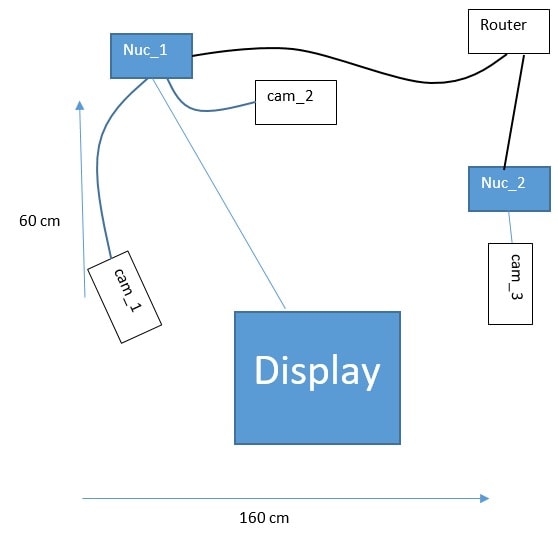

We estimate the translation of cam_2 from cam_1 at 70(cm) on X-axis and 60(cm) on Y-axis. We also estimate the yaw angle of cam_2 relative to cam_1 as 90(degrees) clockwise – this based on our knowledge of the setup in the image above. To simplify things, the coordinate system of cam_1 will serves as the coordinate system for the whole scene. These are the initial parameters we set for the transformation between the 2 cameras.

The following script calculates the transformation between the 2 cameras from the given parameters and publish the frame transformation.

Open a third terminal window – terminal 3 – and enter the following:

cd catkin_ws python src/realsense/realsense2_camera/scripts/set_cams_transforms.py cam_1_link cam_2_link 0.7 0.6 0 -90 0 0

Step 4: Visualizing the point clouds and fine-tune the camera calibration.

Open a fourth terminal – terminal 4 – and run RViz. RViz is a 3D visualizer for displaying sensor data and state information from ROS:

rviz

In the RViz window, do the following:

1. Set “Fixed Frame” to “cam_1_link” 2. Add -> By topic -> /cam_1/depth/color/points/PointCloud2 3. Add -> By topic -> /cam_2/depth/color/points/PointCloud2

Now both point clouds are shown together. If you looked closely it’s easy to see that the clouds are not exactly in position. Some features are duplicated. Now it’s time for fine calibration.

Switch to terminal 3, where set_cams_transforms.py is still running. Use it to fine-calibrate cam_2 relative to cam_1. The instructions are printed by the program:

Using default file /home/doronhi/catkin_ws/src/realsense/realsense2_camera/scripts/_set_cams_info_file.txt Use given initial values. Press the following keys to change mode: x, y, z, (a)zimuth, (p)itch, (r)oll For each mode, press 6 to increase by step and 4 to decrease Press + to multiply step by 2 or - to divide Press Q to quit

Below that a constantly updating line is printed, showing the current mode, value and step size after every key stroke.

Notice, that the program prints the path of the current configuration file. It saves its last configuration automatically, all the time, to be used on the next run. If you have been running the program for a while, and want to back up the configuration file, just copy it aside. Read the rviz manual, by running without any parameters to learn more about changing the configuration filename.

There is no question that it would be much better to find the precise matrix between the cameras automatically. In the meanwhile, after a lot of fiddling around, I was relatively satisfied with the following numbers:

- Parameter

- Value

- x

- 0.75

- y

- 0.575

- z

- 0

- azimuth

- -91.25

- pitch

- 0.75

- roll

- 0

The result is a more complete cover of the scene:

Adding more cameras

There are a couple of reasons why the number of cameras connected to a single computer is limited: CPU usage, USB bandwidth, power supply and also, the length of the USB cable. These are all covered in length in the Multiple camera configuration white paper.

In order to demonstrate the scenario we created the following array:

Both cam_2 and cam_3 are visible in cam_1’s point cloud. That way, it’s easier, though not trivial, to use set_cams_transforms.py to fix their location in cam_1’s coordinate system.

While using multiple computers we have to specifically set the roscore host. In this demo, the first command in each terminal is:

export ROS_MASTER_URI=http://<ros host ip address>:<port>

In this demo, the roscore, cam_1 and cam_2 run on perclnx319 and cam_3 is on perclnx217.

Also, in this demo all the terminals are being opened on roscore’s computer, perclnx319.

The commands to run are as follows – but remember to replace the serial numbers with your own.

Terminal 1

roscore

Terminal 2

export ROS_MASTER_URI=http://perclnx319:11311 roslaunch realsense2_camera rs_camera.launch camera:=cam_1 serial_no:=728312070349 filters:=spatial,temporal,pointcloud

Terminal 3

export ROS_MASTER_URI=http://perclnx319:11311 roslaunch realsense2_camera rs_camera.launch camera:=cam_2 serial_no:=713612070696 filters:=spatial,temporal,pointcloud

Terminal 4 – Used to operate the second machine, perclnx217 in our case

ssh perclnx217 [Enter Pasword] export ROS_MASTER_URI=http://perclnx319:11311 roslaunch realsense2_camera rs_camera.launch camera:=cam_3 serial_no:=725112060487 filters:=spatial,temporal,pointcloud

Publish the spatial connection between cameras.

cam_1 is rotated about 30 degrees compared to the left side of the imaginary square created by the 3 cameras. This camera is our coordinate system.

We estimate the translation of cam_2 from cam_1 at 90(cm) on X-axis and 30(cm) on Y-axis. We also estimate the yaw angle of cam_2 at 120(degrees) clockwise, compared to cam_1. These are the initial parameters we set for the transformation between the 2 cameras.

As for cam_3, we estimate the translation on X-axis at 160(cm) and on Y-axis at -20(cm). We estimate the yaw at 170(degrees) counter-clockwise.

The following script calculates the transformation from the given parameters and publish the frame transform.

Terminal 5

cd catkin_ws export ROS_MASTER_URI=http://perclnx319:11311 python src/realsense/realsense2_camera/scripts/set_cams_transforms.py cam_1_link cam_2_link 0.9 0.3 0 -120 0 0 --file ‘_cams_set_info_1_to_2’

Press Q to Quit.

Now set transformation for cam_3:

python src/realsense/realsense2_camera/scripts/set_cams_transforms.py cam_1_link cam_3_link 1.6 -0.2 0 170 0 0 --file ‘_cams_set_info_1_to_3’

Since set_cams_transforms.py is not a ROS-package and you can’t run 2 nodes with the same name, we run one copy at a time.

From now on, the last values for the relative transformations are saved in the specified files. If you want to modify cam_2 extrinsics, type:

python src/realsense/realsense2_camera/scripts/set_cams_transforms.py cam_1_link cam_2_link --file ‘_cams_set_info_1_to_2’ To modify cam_3: python src/realsense/realsense2_camera/scripts/set_cams_transforms.py cam_1_link cam_3_link --file ‘_cams_set_info_1_to_3’

Notice: Use 2 different file names for saving the results for two different sets of cameras.

Visualizing the point clouds and fine-tuning the camera calibration

Terminal 6

Enter the following command: ‘ rviz ‘ In RViz, do the following:

1. Set “Fixed Frame” to “cam_1_link” 2. Add -> By topic -> /cam_1/depth/color/points/PointCloud2 3. Add -> By topic -> /cam_2/depth/color/points/PointCloud2 4. Add -> By topic -> /cam_3/depth/color/points/PointCloud2 5. Add -> By display type -> TF 6. In the “Displays” window under TF > Frames enable only cam_1_link, cam_2_link and cam_3_link. Just for clarity reasons.

Now three point clouds are shown together. It’s easy to see that the clouds are not exactly in position. Some features are duplicated. Now it’s time for fine calibration:

Switching to terminal 5, where set_cams_transforms.py is still running, we use it to fine calibrate cam_2 and cam_3 relative to cam_1.

The instruction are printed by the program:

Using file /home/doronhi/catkin_ws/_cams_set_info_1_to_3.txt read initial values from it. Press the following keys to change mode: x, y, z, (a)zimuth, (p)itch, (r)oll. For each mode, press 6 to increase by step and 4 to decrease. Press + to multiply step by 2 or - to divide. Press Q to quit

Without an automatic calibration tool it can be quite challenging to tune the cameras. Here are a couple of tips for calibration:

- Calibrate one camera at a time.

- Set cam_2 in the field view of cam_1. Keep in RViz display only the point cloud of cam_1 and the axis notation (TF) of cam_2. Now it’s possible to watch the axis notation of cam_2 and change its x,y,z parameters until it’s located right on top of the camera as seen in cam_1’s point cloud. You can even try and put some distinct object close by and directly in line of sight of cam_2 and try to modify its orientation parameters to align the axis image so it will point to the object as seen in cam_1’s point cloud.

- Now, display also the point cloud of cam_2. Put a board to be seen by both cameras. Modify cam_2’s parameters to rotate the board until it is aligned parallel to the board as seen from cam_1, then translate it, rotate again and so on, iteratively, until they are exactly aligned. Use the board to understand what parameter to modify and your face for fine tuning.

- Repeat step 2 and 3 for cam_3 as well.

Eventually, I was relatively satisfied with the following numbers.

For cam_2:

- Parameter

- Value

- x

- 0.95

- y

- 0.38125

- z

- -0.015625

- azimuth

- -104.25

- pitch

- 0

- roll

- -0.5

for cam_3:

- Parameter

- Value

- x

- 1.5875

- y

- -0.703125

- z

- -0.05

- azimuth

- 163

- pitch

- 1

- roll

- 1.25

No doubt, a SLAM based solution is better then the manual labor I’ve been through to get this calibration, both in time and results. Nevertheless, the final 3D-model I got looked like that:

Subscribe here to get blog and news updates.

You may also be interested in

“Intel RealSense acts as the eyes of the system, feeding real-world data to the AI brain that powers the MR

In a three-dimensional world, we still spend much of our time creating and consuming two-dimensional content. Most of the screens